Best-Worst Scaling

Potato's Best-Worst Scaling scheme supports efficient comparative annotation: it automatically generates comparison tuples and converts best/worst selections into continuous quality scores.

New in v2.3.0

Best-Worst Scaling (BWS), also known as Maximum Difference Scaling (MaxDiff), is a comparative annotation method in which annotators are shown a tuple of items (typically 4) and asked to select the best and the worst item according to some criterion. BWS turns these simple choices into reliable scalar scores, and typically needs fewer annotations than direct rating scales to reach comparable reliability.

BWS is especially useful when:

- Direct numerical ratings suffer from annotator bias (scale usage varies between people)

- You need a reliable ranking of hundreds or thousands of items

- The quality dimension is inherently relative (e.g., "which translation is most fluent?")

- You want to maximize information per annotation (in a 4-item tuple, one best/worst judgment determines 5 of the 6 pairwise orderings)

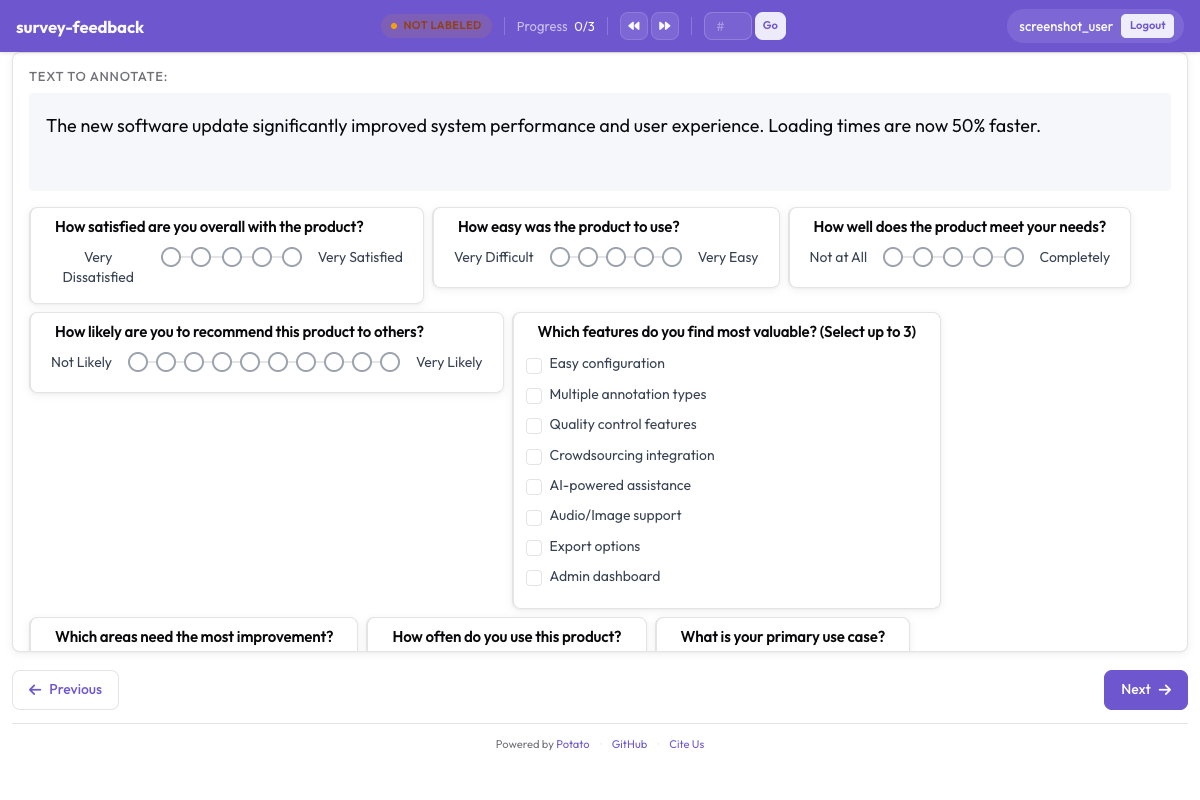

Best-worst scaling interface for comparative annotation in Potato


Basic Configuration

    annotation_schemes:
      - annotation_type: best_worst_scaling
        name: fluency
        description: "Select the BEST and WORST translation by fluency"

        # Items to compare
        items_key: "translations"  # key in instance data containing the list of items

        # Tuple size (how many items shown at once)
        tuple_size: 4  # typically 4; valid range is 3-8

        # Labels for best/worst buttons
        best_label: "Most Fluent"
        worst_label: "Least Fluent"

        # Display options
        show_item_labels: true  # show "A", "B", "C", "D" labels
        randomize_order: true  # randomize item order within each tuple
        show_source: false  # optionally show which system produced each item

        # Validation
        label_requirement:
          required: true  # must select both best and worst

Data Format

Each instance in your data file should contain a list of items to compare. Potato generates tuples from this list automatically.

Option 1: All Items in One Instance

If you have a single set of items to rank (e.g., translations of one sentence):

    {
      "id": "sent_001",
      "source": "The cat sat on the mat.",
      "translations": [
        {"id": "sys_a", "text": "Le chat s'est assis sur le tapis."},
        {"id": "sys_b", "text": "Le chat a assis sur le tapis."},
        {"id": "sys_c", "text": "Le chat etait assis sur le mat."},
        {"id": "sys_d", "text": "Le chat se tenait sur le tapis."}
      ]
    }

Option 2: Pre-Generated Tuples

If you want full control over which items appear together, provide pre-generated tuples:

    {
      "id": "tuple_001",
      "translations": [
        {"id": "sys_a", "text": "Le chat s'est assis sur le tapis."},
        {"id": "sys_b", "text": "Le chat a assis sur le tapis."},
        {"id": "sys_c", "text": "Le chat etait assis sur le mat."},
        {"id": "sys_d", "text": "Le chat se tenait sur le tapis."}
      ]
    }

Automatic Tuple Generation

When your items list is longer than the tuple size, Potato automatically generates tuples. The generation algorithm ensures:

- Every item appears in roughly the same number of tuples

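- Every pair of items co-occurs in at least one tuple (for reliable relative scoring), as far as the tuple budget allows: an item can meet at most tuples_per_item * (tuple_size - 1) distinct partners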

- Tuples are balanced so that no item is always shown first or last

Configure tuple generation:

    annotation_schemes:
      - annotation_type: best_worst_scaling
        name: fluency
        items_key: "translations"
        tuple_size: 4

        tuple_generation:
          method: balanced_incomplete  # balanced_incomplete or random
          tuples_per_item: 5  # each item appears in ~5 tuples
          seed: 42  # for reproducibility
          ensure_pair_coverage: true  # every pair co-occurs at least once

For a set of N items with tuple size T and tuples_per_item = K, Potato generates approximately N * K / T total tuples.
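As a rough check on the arithmetic above, the sketch below estimates the tuple count and tests whether full pair coverage is attainable at all for a given budget. The helper name `bws_design_summary` and the decision to round the tuple count up are assumptions made for illustration; this is not a Potato function.

```python
import math

def bws_design_summary(n_items, tuple_size, tuples_per_item):
    """Back-of-envelope numbers for a BWS design (illustrative helper only)."""
    # Approximate tuple count N * K / T; rounding up is an assumption.
    n_tuples = math.ceil(n_items * tuples_per_item / tuple_size)
    total_pairs = math.comb(n_items, 2)
    # Upper bound on covered pairs: each tuple contains C(T, 2) pairs,
    # and the bound is reached only if no pair is ever repeated.
    max_pairs_covered = min(total_pairs, n_tuples * math.comb(tuple_size, 2))
    # An item meets at most K * (T - 1) distinct partners, so this is a
    # necessary (not sufficient) condition for every pair to co-occur.
    full_coverage_feasible = tuples_per_item * (tuple_size - 1) >= n_items - 1
    return {
        "n_tuples": n_tuples,
        "total_pairs": total_pairs,
        "max_pairs_covered": max_pairs_covered,
        "full_coverage_feasible": full_coverage_feasible,
    }

print(bws_design_summary(200, 4, 5))
# {'n_tuples': 250, 'total_pairs': 19900, 'max_pairs_covered': 1500, 'full_coverage_feasible': False}
```

With 200 items, tuple size 4, and tuples_per_item = 5, at most 1,500 of the 19,900 possible pairs can co-occur, so pair coverage at that scale is necessarily partial. Raising tuples_per_item or reducing the item set are the two levers that change this.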

Generation Methods

balanced_incomplete (default): Uses a balanced incomplete block design to maximize statistical efficiency. Every item appears equally often, and pair co-occurrence is as uniform as possible. Recommended for most use cases.

random: Randomly samples tuples with replacement. Faster for very large item sets (N > 10,000) but less statistically efficient. Use when exact balance is not critical.
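To make the idea of balanced generation concrete, here is a minimal sketch of a shuffle-and-chunk scheme: shuffle the items, cut the shuffled list into tuples, and repeat once per desired appearance. This is a simple randomized approximation, not a true balanced incomplete block design and not Potato's actual algorithm; `generate_tuples` and its parameters are hypothetical names that only echo the config keys above.

```python
import random
from collections import Counter

def generate_tuples(item_ids, tuple_size=4, tuples_per_item=5, seed=42):
    """Shuffle-and-chunk tuple generation (illustrative, assumes unique ids)."""
    if len(item_ids) < tuple_size:
        raise ValueError("need at least tuple_size items")
    rng = random.Random(seed)  # fixed seed for reproducibility
    tuples = []
    for _ in range(tuples_per_item):
        order = list(item_ids)
        rng.shuffle(order)
        for start in range(0, len(order), tuple_size):
            chunk = order[start:start + tuple_size]
            if len(chunk) < tuple_size:
                # Pad the final short chunk with random items not already in it,
                # so a few items appear slightly more often than the rest.
                pool = [i for i in order if i not in chunk]
                chunk += rng.sample(pool, tuple_size - len(chunk))
            tuples.append(tuple(chunk))
    return tuples

items = [f"item_{i}" for i in range(10)]
tuples = generate_tuples(items, tuple_size=4, tuples_per_item=5, seed=42)
appearances = Counter(item for t in tuples for item in t)
print(len(tuples), min(appearances.values()), max(appearances.values()))
```

Each pass places every item exactly once, which keeps appearance counts nearly equal. The scheme does not control pair co-occurrence and may repeat a tuple across passes, which is where a proper block design does better.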

Pre-Generating Tuples via CLI

For large-scale projects, generate tuples ahead of time:

    python -m potato.bws generate-tuples \
      --items data/items.jsonl \
      --tuple-size 4 \
      --tuples-per-item 5 \
      --output data/tuples.jsonl \
      --seed 42

Scoring Methods

After annotation, Potato computes item scores from BWS judgments using one of three methods.

1. Counting (Default)

The simplest method. Each item's score is the proportion of times it was selected as "best" minus the proportion of times it was selected as "worst":

Score(item) = (best_count - worst_count) / total_appearances

Scores range from -1.0 (always worst) to +1.0 (always best).
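The formula can be reproduced in a few lines, which is handy for sanity-checking exported scores. The sketch assumes each judgment is a dict with an `items` list (the ids shown in the tuple) plus `best` and `worst` ids; that record shape is an assumption for illustration and may differ from what Potato writes to disk.

```python
from collections import Counter

def counting_scores(judgments):
    """Score(item) = (best_count - worst_count) / total_appearances."""
    best, worst, seen = Counter(), Counter(), Counter()
    for judgment in judgments:
        seen.update(judgment["items"])  # every shown item counts as an appearance
        best[judgment["best"]] += 1
        worst[judgment["worst"]] += 1
    return {item: (best[item] - worst[item]) / n for item, n in seen.items()}

shown = ["sys_a", "sys_b", "sys_c", "sys_d"]
judgments = [
    {"items": shown, "best": "sys_d", "worst": "sys_c"},
    {"items": shown, "best": "sys_a", "worst": "sys_c"},
    {"items": shown, "best": "sys_d", "worst": "sys_b"},
]
scores = counting_scores(judgments)
for item, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{item}: {score:+.2f}")
# sys_d ranks first with 2/3 and sys_c last with -2/3
```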

    python -m potato.bws score \
      --config config.yaml \
      --method counting \
      --output scores.csv

2. Bradley-Terry

Fits a Bradley-Terry model to the pairwise comparisons implied by BWS judgments. Each "best" selection implies the best item is preferred over all other items in the tuple; each "worst" selection implies all other items are preferred over the worst.

Bradley-Terry produces scores on a log-odds scale with better statistical properties than counting, especially with sparse data.
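The pairwise expansion and the fit can be sketched as follows. This is a minimal illustration using the standard minorization-maximization (MM) update for Bradley-Terry, not Potato's implementation. The function names, the judgment record shape (`items`, `best`, `worst`), and the small `prior` pseudo-count (a fractional win and loss against a reference item of strength 1, added so that never-chosen items keep finite scores) are all assumptions; `max_iter` and `tolerance` merely echo the CLI flags.

```python
import math
from collections import defaultdict

def implied_pairs(items, best, worst):
    """Expand one best/worst judgment into (winner, loser) pairs."""
    pairs = [(best, other) for other in items if other != best]
    pairs += [(other, worst) for other in items if other not in (best, worst)]
    return pairs  # 2T - 3 pairs for a tuple of size T

def fit_bradley_terry(judgments, max_iter=1000, tolerance=1e-6, prior=0.1):
    """Return log-strengths per item, fitted with MM updates."""
    wins = defaultdict(float)
    games = defaultdict(lambda: defaultdict(float))  # games[i][k] = comparisons of i vs k
    for judgment in judgments:
        pairs = implied_pairs(judgment["items"], judgment["best"], judgment["worst"])
        for winner, loser in pairs:
            wins[winner] += 1
            games[winner][loser] += 1
            games[loser][winner] += 1
    items = sorted(games)
    strength = {i: 1.0 for i in items}
    for _ in range(max_iter):
        updated = {}
        for i in items:
            # Reference item: 2 * prior virtual comparisons at strength 1.
            denom = 2 * prior / (strength[i] + 1.0)
            denom += sum(n / (strength[i] + strength[k]) for k, n in games[i].items())
            updated[i] = (wins[i] + prior) / denom
        change = max(abs(math.log(updated[i] / strength[i])) for i in items)
        strength = updated
        if change < tolerance:
            break
    return {i: math.log(s) for i, s in strength.items()}

shown = ["sys_a", "sys_b", "sys_c", "sys_d"]
judgments = [
    {"items": shown, "best": "sys_d", "worst": "sys_c"},
    {"items": shown, "best": "sys_a", "worst": "sys_c"},
    {"items": shown, "best": "sys_d", "worst": "sys_b"},
]
bt_scores = fit_bradley_terry(judgments)
print(sorted(bt_scores, key=bt_scores.get, reverse=True))
```

The only pair a judgment leaves undetermined is the one between the middle items, which is why a 4-item tuple yields 5 comparisons rather than 6.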

    python -m potato.bws score \
      --config config.yaml \
      --method bradley_terry \
      --max-iter 1000 \
      --tolerance 1e-6 \
      --output scores.csv

3. Plackett-Luce

A generalization of Bradley-Terry that models the ranking implied by each tuple judgment (best > middle items > worst, with the middle items left unordered relative to each other). Plackett-Luce extracts more information from each annotation than Bradley-Terry.
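One way to read a best/worst judgment under Plackett-Luce is as a partial ranking: its probability is the sum over every full ranking that puts the chosen best item first and the chosen worst item last. The sketch below computes that quantity for given item strengths. It illustrates the model only and makes no claim about how Potato fits it; the function names and the `strength` dict are hypothetical.

```python
from itertools import permutations

def pl_ranking_prob(ranking, strength):
    """Plackett-Luce probability of one full ranking, best item first."""
    prob = 1.0
    for pos, item in enumerate(ranking):
        # Each position is a choice among the items not yet placed.
        remaining = sum(strength[other] for other in ranking[pos:])
        prob *= strength[item] / remaining
    return prob

def pl_best_worst_prob(items, best, worst, strength):
    """Probability that `best` ranks first and `worst` ranks last."""
    middle = [i for i in items if i not in (best, worst)]
    return sum(
        pl_ranking_prob((best, *order, worst), strength)
        for order in permutations(middle)
    )

strength = {"sys_a": 4.0, "sys_b": 2.0, "sys_c": 1.0, "sys_d": 1.0}
items = list(strength)
likely = pl_best_worst_prob(items, "sys_a", "sys_d", strength)
unlikely = pl_best_worst_prob(items, "sys_d", "sys_a", strength)
print(f"{likely:.4f} vs {unlikely:.4f}")
```

Fitting the model then amounts to choosing strengths that maximize the product of these probabilities over all collected judgments.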

    python -m potato.bws score \
      --config config.yaml \
      --method plackett_luce \
      --output scores.csv

Comparing Scoring Methods

| Method | Speed | Data Efficiency | Handles Sparse Data | Statistical Model |
|---|---|---|---|---|
| Counting | Fast | Low | Yes | None (descriptive) |
| Bradley-Terry | Medium | Medium | Moderate | Pairwise comparison |
| Plackett-Luce | Slower | High | Moderate | Full ranking |

For most projects, Bradley-Terry is the best default. Use Counting for quick exploratory analysis and Plackett-Luce when you need maximum statistical efficiency from limited annotations.

Scoring Configuration in YAML

You can also configure scoring directly in the project config for automatic computation:

    annotation_schemes:
      - annotation_type: best_worst_scaling
        name: fluency
        items_key: "translations"
        tuple_size: 4

        scoring:
          method: bradley_terry
          auto_compute: true  # compute scores after each annotation session
          output_file: "output/fluency_scores.csv"
          include_confidence: true  # include confidence intervals
          bootstrap_iterations: 1000  # for confidence interval estimation

Admin Dashboard Integration

The admin dashboard includes a dedicated BWS tab showing:

- Score distribution: Histogram of current item scores

- Annotation progress: How many tuples have been annotated vs. total

- Per-item coverage: How many times each item has been seen

- Inter-annotator consistency: Split-half reliability of BWS scores

- Score convergence: Line chart showing how scores stabilize as more annotations are collected

Access BWS analytics from the command line:

    python -m potato.bws stats --config config.yaml

    BWS Statistics
    ==============
    Schema: fluency
    Items: 200
    Tuples: 250 (annotated: 180 / 250)
    Annotations: 540 (3 annotators)

    Score Summary (Bradley-Terry):
      Mean: 0.02
      Std: 0.43
      Range: -0.91 to +0.87

    Top 5 Items:
      sys_d: 0.87 (±0.08)
      sys_a: 0.72 (±0.09)
      sys_f: 0.65 (±0.10)
      sys_b: 0.51 (±0.11)
      sys_k: 0.48 (±0.09)

    Split-Half Reliability: r = 0.94
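Split-half reliability, as reported above, can be approximated by hand: split each tuple's judgments randomly into two halves, score each half independently, and correlate the two score sets. The sketch below uses counting scores and Pearson's r averaged over several random splits. The record fields (`tuple_id`, `items`, `best`, `worst`) and function names are assumptions, and Potato's own computation may differ, for instance in the scoring method or the number of splits.

```python
import math
import random
from collections import Counter, defaultdict

def counting_scores(judgments):
    best, worst, seen = Counter(), Counter(), Counter()
    for j in judgments:
        seen.update(j["items"])
        best[j["best"]] += 1
        worst[j["worst"]] += 1
    return {i: (best[i] - worst[i]) / n for i, n in seen.items()}

def pearson(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        return float("nan")  # correlation is undefined without variance
    return cov / math.sqrt(vx * vy)

def split_half_reliability(judgments, n_splits=100, seed=0):
    rng = random.Random(seed)
    by_tuple = defaultdict(list)
    for j in judgments:
        by_tuple[j["tuple_id"]].append(j)
    correlations = []
    for _ in range(n_splits):
        half_a, half_b = [], []
        for group in by_tuple.values():
            shuffled = group[:]
            rng.shuffle(shuffled)
            half_a.extend(shuffled[0::2])  # alternate judgments between halves
            half_b.extend(shuffled[1::2])
        scores_a, scores_b = counting_scores(half_a), counting_scores(half_b)
        shared = sorted(set(scores_a) & set(scores_b))
        correlations.append(
            pearson([scores_a[i] for i in shared], [scores_b[i] for i in shared])
        )
    return sum(correlations) / len(correlations)
```

Each tuple needs at least two judgments for both halves to see it. With fewer, the items scored in each half diverge and the correlation is computed on a smaller shared set.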

Multiple BWS Dimensions

You can run multiple BWS schemas on the same set of items to evaluate different quality dimensions:

    annotation_schemes:
      - annotation_type: best_worst_scaling
        name: fluency
        description: "Select BEST and WORST by fluency"
        items_key: "translations"
        tuple_size: 4
        best_label: "Most Fluent"
        worst_label: "Least Fluent"

      - annotation_type: best_worst_scaling
        name: adequacy
        description: "Select BEST and WORST by meaning preservation"
        items_key: "translations"
        tuple_size: 4
        best_label: "Most Accurate"
        worst_label: "Least Accurate"

Both schemas share the same tuples (Potato generates one set of tuples per items_key), so annotators see each tuple once but provide two judgments.

Output Format

BWS annotations are saved per-tuple:

    {
      "id": "tuple_001",
      "annotations": {
        "fluency": {
          "best": "sys_d",
          "worst": "sys_c"
        },
        "adequacy": {
          "best": "sys_a",
          "worst": "sys_c"
        }
      },
      "annotator": "user_1",
      "timestamp": "2026-03-01T14:22:00Z"
    }

Full Example

Complete configuration for evaluating machine translation systems:

    annotation_task_name: "MT System Ranking (BWS)"
    task_dir: "."

    data_files:
      - "data/mt_tuples.jsonl"

    item_properties:
      id_key: id
      text_key: source

    instance_display:
      fields:
        - key: source
          type: text
          display_options:
            label: "Source Sentence"

    annotation_schemes:
      - annotation_type: best_worst_scaling
        name: overall_quality
        description: "Select the BEST and WORST translation"
        items_key: "translations"
        tuple_size: 4
        best_label: "Best Translation"
        worst_label: "Worst Translation"
        randomize_order: true
        show_item_labels: true

        tuple_generation:
          method: balanced_incomplete
          tuples_per_item: 5
          seed: 42

        scoring:
          method: bradley_terry
          auto_compute: true
          output_file: "output/quality_scores.csv"
          include_confidence: true

    output_annotation_dir: "output/"
    export_annotation_format: "jsonl"

Further Reading

- Pairwise Comparison -- simpler two-item comparison

- Likert Scales -- direct rating alternative

- Multirate -- multi-dimensional direct ratings

- Export Formats -- export BWS data for analysis

For implementation details, see the source documentation.