Potato vs LangSmith and Langfuse for Agent Evaluation: A Practical Comparison

Compare Potato with LangSmith and Langfuse for AI agent evaluation across trace rendering, annotation, coding agent support, live observation, pricing, and self-hosting.

Three Tools, Three Philosophies

Potato, LangSmith, and Langfuse all touch agent evaluation, but they come at it from fundamentally different directions:

- Potato is annotation-first. It was built for collecting structured human judgments on AI outputs, then extended to support agent traces, coding diffs, and live observation. Its core strength is the depth and configurability of its annotation schemas.

- LangSmith is observability-first. It was built to instrument, trace, and debug LangChain applications in production. Its annotation features were added later to support evaluation workflows within the LangChain ecosystem.

- Langfuse is open-source observability. It covers tracing, prompt management, and evaluation for any LLM application. Its annotation features are functional but secondary to its monitoring capabilities.

None of these tools is universally "better." The right choice depends on whether your primary need is annotation, observability, or both, and whether you are operating within a specific framework ecosystem.

Feature Comparison

The following table compares capabilities relevant to AI agent evaluation. We also include Label Studio, Argilla, and Scale AI for broader context, since teams often evaluate these alternatives as well.

| Feature | Potato | LangSmith | Langfuse | Label Studio | Argilla | Scale AI |
|---|---|---|---|---|---|---|
| Primary purpose | Annotation | Observability | Observability | Annotation | Annotation | Annotation |
| Trace format support | 13 formats | LangChain native | Langfuse SDK | None | None | Custom |
| Per-step annotation | Full (trajectory_eval) | Limited (score per run) | Limited (score per trace or observation) | No | No | Custom |
| Real-time agent observation | Yes | No | No | No | No | No |
| Agent pause/resume/takeover | Yes | No | No | No | No | No |
| Code diff rendering | Yes (unified diff, syntax highlighting) | No | No | No | No | No |
| Terminal output rendering | Yes (ANSI color support) | No | Partial | No | No | No |
| PRM data collection | Yes (step-level correctness labels) | No | No | No | No | No |
| Code review with inline comments | Yes (line-level, categorized) | No | No | No | No | No |
| Pairwise comparison | 3 modes (side-by-side, sequential, blind) | Limited (1 mode) | No | No | Yes (1 mode) | Yes (1 mode) |
| Multi-criteria rubric | Yes (configurable dimensions, scales) | No | No | No | Partial | Yes |
| Error taxonomy | Hierarchical, configurable | No | No | No | No | Custom |
| Severity scoring | Yes (weighted, running score) | No | Numeric scores only | No | No | Custom |
| Self-hosted | Yes (fully) | Enterprise plan only | Yes (open source) | Yes (open source) | Yes (open source) | No (managed) |
| Free | Yes (fully open source) | No (free tier limited) | Partial (open source core) | Partial (open source core) | Yes (open source) | No |
| Framework lock-in | None | LangChain ecosystem | None | None | None | None |

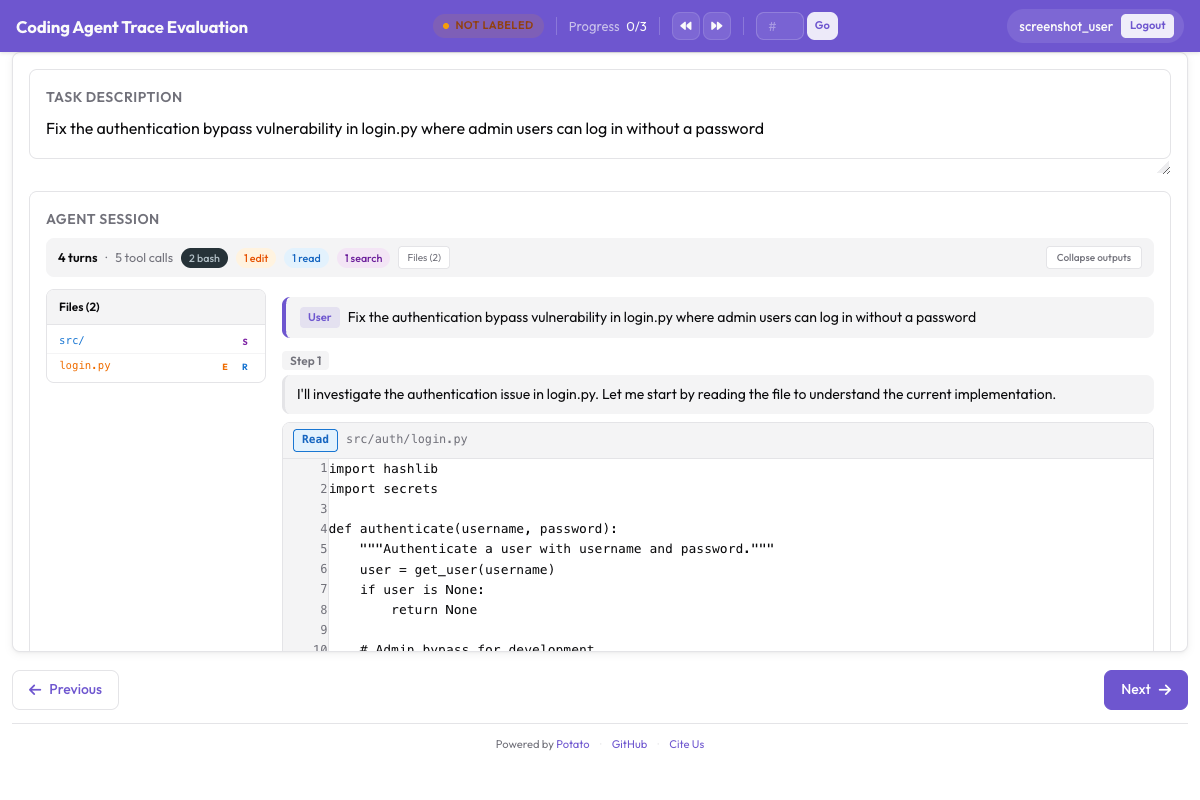

Potato renders coding agent traces with proper diff formatting, a capability none of the other tools compared here offers:

Potato's CodingTraceDisplay with unified diff rendering, syntax highlighting, and file tree

Trace Format Support in Detail

One of the biggest practical differences is how many agent trace formats each tool supports natively.

Potato includes converters for 13 formats:

```bash
# See all available converters
python -m potato.convert_traces --list-formats

# Available formats:
#   react        - ReAct-style (Thought/Action/Observation)
#   openai       - OpenAI function calling / tool use
#   anthropic    - Anthropic Messages API with tool_use
#   langchain    - LangChain run traces
#   langfuse     - Langfuse trace exports
#   langsmith    - LangSmith dataset exports
#   autogen      - Microsoft AutoGen conversations
#   crewai       - CrewAI task outputs
#   swe_agent    - SWE-Agent trajectories
#   claude_code  - Claude Code session logs
#   aider        - Aider chat histories
#   webarena     - WebArena episode traces
#   custom       - User-defined format with mapping config
```

LangSmith works natively with LangChain traces. If your agent is built with LangChain or LangGraph, traces flow in automatically. If not, you need to manually instrument your code with the LangSmith SDK or convert your traces to LangSmith's format.

Langfuse works natively with traces instrumented via the Langfuse SDK. It provides Python and JavaScript SDKs and integrates with LangChain, LlamaIndex, and OpenAI. Like LangSmith, it requires instrumentation at the code level rather than post-hoc trace conversion.
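
To make "post-hoc trace conversion" concrete, here is a minimal sketch of what a converter for ReAct-style logs might do. It is not Potato's converter: the `parse_react` function and the output field names (`thought`, `action`, `observation`) are assumptions chosen for illustration, and real logs will need more robust parsing.

```python
import re
from typing import Dict, List

# Matches lines such as "Thought: ..." or "Action: ..." (the ReAct labels).
LINE_RE = re.compile(r"^(Thought|Action|Observation):\s*(.*)$")


def parse_react(log: str) -> List[Dict[str, str]]:
    """Split a ReAct-style text log into one dict per step.

    A new step starts at every "Thought:" line. Unlabeled lines are
    treated as continuations of the previous field.
    """
    steps: List[Dict[str, str]] = []
    current_key = None
    for raw in log.splitlines():
        line = raw.strip()
        if not line:
            continue
        match = LINE_RE.match(line)
        if match:
            current_key = match.group(1).lower()
            if current_key == "thought" or not steps:
                steps.append({})
            steps[-1][current_key] = match.group(2)
        elif current_key is not None and steps:
            steps[-1][current_key] += "\n" + line
    return steps


example_log = """
Thought: I need the current weather.
Action: get_weather(city="Paris")
Observation: 18C, cloudy
Thought: I can answer now.
Action: finish("It is 18C and cloudy in Paris.")
"""

for index, step in enumerate(parse_react(example_log)):
    print(index, step)
```

The point of the sketch is that conversion works on logs you already have, so the agent itself does not need to be re-instrumented.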

Importing LangSmith or Langfuse Traces into Potato

If you already have traces in LangSmith or Langfuse and want to use Potato's annotation features, the converters handle this:

```bash
# Export from LangSmith and convert
python -m potato.convert_traces \
  --input langsmith_export.jsonl \
  --output data/traces.jsonl \
  --format langsmith

# Export from Langfuse and convert
python -m potato.convert_traces \
  --input langfuse_traces.json \
  --output data/traces.jsonl \
  --format langfuse
```

This means you can use LangSmith or Langfuse for production monitoring and Potato for deep human evaluation, getting the best of both worlds.

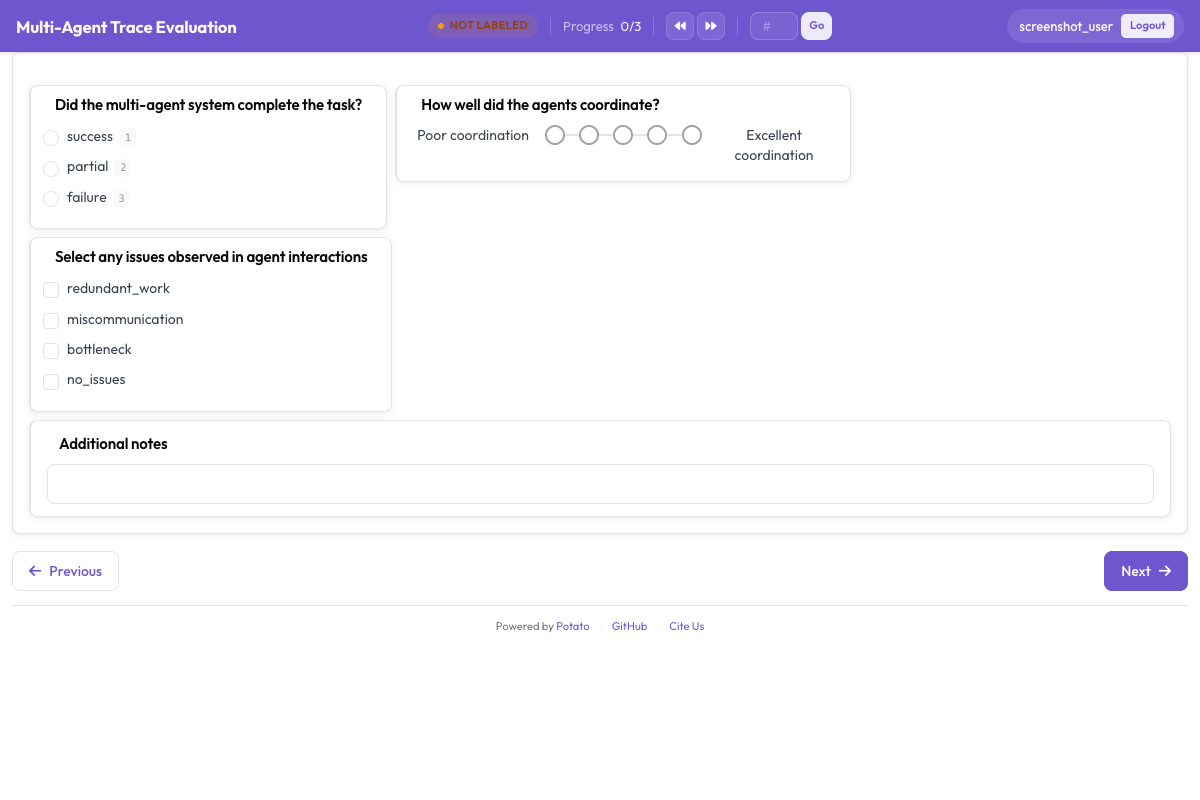

Per-Step Annotation: Where the Gap Is Widest

The most significant capability difference is in per-step annotation depth.

Potato provides the trajectory_eval schema with:

- Step-level correctness labels (correct/incorrect)

- Hierarchical error taxonomy selection

- Severity levels with configurable weights

- Running score that updates per step

- Free-text rationale per step

- All of this is configurable via YAML

```yaml
# Potato: rich per-step annotation
annotation_schemes:
  - annotation_type: "trajectory_eval"
    name: "step_eval"
    step_correctness:
      labels: ["correct", "incorrect"]
    error_taxonomy:
      - category: "reasoning"
        types:
          - name: "logical_error"
          - name: "incorrect_assumption"
      - category: "action"
        types:
          - name: "wrong_tool"
          - name: "wrong_arguments"
    severity_levels:
      - name: "minor"
        weight: -1
      - name: "major"
        weight: -5
      - name: "critical"
        weight: -10
    running_score:
      initial: 100
```

LangSmith allows you to attach a numeric score or a categorical label to individual runs within a trace. This is useful for basic quality tracking but does not support error taxonomies, severity weighting, or running scores. There is no built-in per-step review interface; you score runs from the trace detail view.

Langfuse supports scoring at the trace level and at the observation (span) level. You can attach numeric or categorical scores. Like LangSmith, there is no structured error taxonomy or severity system. Scores are flat key-value pairs.

For teams that need detailed per-step evaluation, such as building PRM training data or diagnosing agent failure modes, Potato is the only option among these three that provides purpose-built tooling.
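
As a worked example of the severity-weighted running score, the sketch below applies the weights from the config above (minor -1, major -5, critical -10, starting at 100) to a five-step trajectory. The additive rule and the `running_scores` helper are assumptions for illustration; Potato's exact scoring logic may differ.

```python
from typing import Dict, List, Optional

# Weights and initial score taken from the severity_levels / running_score config above.
SEVERITY_WEIGHTS: Dict[str, int] = {"minor": -1, "major": -5, "critical": -10}
INITIAL_SCORE = 100


def running_scores(step_severities: List[Optional[str]]) -> List[int]:
    """Return the score after each step.

    Each entry is the severity assigned to an incorrect step, or None
    for a step labeled correct (which leaves the score unchanged).
    """
    score = INITIAL_SCORE
    history: List[int] = []
    for severity in step_severities:
        if severity is not None:
            score += SEVERITY_WEIGHTS[severity]
        history.append(score)
    return history


# Five-step trajectory: correct, minor error, correct, major error, critical error.
labels = [None, "minor", None, "major", "critical"]
print(running_scores(labels))  # [100, 99, 99, 94, 84]
```

A flat numeric score per run cannot express this kind of trajectory: the per-step history shows where the agent went wrong, not just that the final total was 84.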

Coding Agent Support

Evaluating coding agents (Claude Code, Aider, SWE-Agent, Devin, OpenHands) requires rendering code diffs and terminal output in a way that makes review efficient. This is an area where Potato has unique capabilities.

Potato renders:

- Unified diffs with red/green highlighting and syntax highlighting

- Terminal output with ANSI color code support

- Inline diff comments anchored to specific lines

- File-level quality ratings

- PR-style approve/request-changes verdicts

LangSmith displays tool call inputs and outputs as formatted JSON. Code diffs appear as raw text strings within tool outputs. There is no diff rendering, syntax highlighting, or inline commenting.

Langfuse similarly displays trace data as structured JSON. Code content appears as plain text. No specialized rendering for diffs or terminal output.

If you are evaluating coding agents, the difference in reviewer experience is substantial. Reviewing a 50-line diff in a syntax-highlighted unified diff view with inline commenting takes a fraction of the time it takes to review the same diff as a JSON string.
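
The gap between the two views is easy to reproduce with Python's standard `difflib` module. The sketch below builds a unified diff for a two-line change and then prints the same diff as it would appear inside a JSON tool output. The file names and the `edit_file` tool name are hypothetical.

```python
import difflib
import json

before = """def area(w, h):
    return w + h
""".splitlines(keepends=True)

after = """def area(w, h):
    return w * h
""".splitlines(keepends=True)

# Unified diff: the line-oriented format a diff-aware viewer can color
# and anchor inline comments to.
diff_text = "".join(
    difflib.unified_diff(before, after, fromfile="a/geometry.py", tofile="b/geometry.py")
)
print(diff_text)

# The same diff embedded in a JSON tool output: one escaped string
# with no visible line structure.
print(json.dumps({"tool": "edit_file", "output": diff_text}))
```

Even for this tiny change, the JSON form puts headers, context, and changed lines on a single line separated by `\n` escapes, which is what a reviewer has to untangle when a tool has no diff renderer.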

Web agent traces include screenshots with SVG overlays and filmstrip navigation:

Web agent trace viewer showing screenshots with SVG action overlays and filmstrip navigation

Live Agent Observation

Potato supports real-time observation of running agents, a capability that neither LangSmith nor Langfuse offers in its annotation workflow.

With Potato's live observation mode, an annotator can:

- Watch the agent work in real time, seeing each step as it happens

- Pause the agent at any point to review its current state

- Resume the agent after review

- Take over the agent's session to correct course or complete the task manually

This is particularly useful for:

- Safety evaluation: stop an agent before it takes a destructive action

- Training data: record human corrections as demonstration data

- Interactive evaluation: test how agents respond to mid-stream interventions

```yaml
# Enable live observation in Potato config
live_observation:
  enabled: true
  agent_endpoint: "http://localhost:5000/agent"
  allow_pause: true
  allow_takeover: true
  auto_pause_on:
    - action_type: "delete"
    - action_type: "execute"
    - confidence_below: 0.3
```

LangSmith and Langfuse are designed for post-hoc analysis of completed traces, not real-time interaction with running agents.
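
One way to read the `auto_pause_on` rules in the config above is as a list of conditions where any single match pauses the agent. The sketch below implements that reading. The OR semantics, the `should_pause` helper, and the step fields `action_type` and `confidence` are assumptions for illustration, not Potato's documented behavior.

```python
from typing import Any, Dict, List

# Mirrors the auto_pause_on list in the config above.
AUTO_PAUSE_RULES: List[Dict[str, Any]] = [
    {"action_type": "delete"},
    {"action_type": "execute"},
    {"confidence_below": 0.3},
]


def should_pause(step: Dict[str, Any], rules: List[Dict[str, Any]]) -> bool:
    """Return True if any rule matches the step (rules are OR-ed)."""
    for rule in rules:
        if "action_type" in rule and step.get("action_type") == rule["action_type"]:
            return True
        if "confidence_below" in rule:
            confidence = step.get("confidence")
            if confidence is not None and confidence < rule["confidence_below"]:
                return True
    return False


steps = [
    {"action_type": "read", "confidence": 0.9},     # no rule matches
    {"action_type": "delete", "confidence": 0.95},  # matches the "delete" rule
    {"action_type": "read", "confidence": 0.2},     # confidence below 0.3
]
for step in steps:
    print(step["action_type"], step["confidence"], should_pause(step, AUTO_PAUSE_RULES))
```

Under this reading, a confident `delete` still pauses, which matches the safety use case: destructive actions get a human check regardless of how sure the agent is.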

Pairwise Comparison

Comparing two agent outputs side-by-side is a core evaluation pattern, especially for A/B testing model versions or comparing agent architectures.

Potato offers three comparison modes:

- Side-by-side: Both traces visible simultaneously, synced scrolling

- Sequential: View trace A first, then trace B, then judge

- Blind: Traces are randomly labeled "A" and "B" with no model attribution

```yaml
annotation_schemes:
  - annotation_type: "pairwise"
    name: "comparison"
    mode: "side_by_side"  # or "sequential" or "blind"
    labels:
      - name: "A is better"
      - name: "B is better"
      - name: "Tie"
    criteria:
      - "Which agent completed the task more efficiently?"
      - "Which agent's output is higher quality?"
```

LangSmith supports basic pairwise comparison through its "comparison view" in evaluation experiments. You can compare runs across different model versions, but the interface is designed for side-by-side run inspection rather than structured preference annotation.

Langfuse does not have a built-in pairwise comparison feature for annotation.
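
The idea behind blind mode can be sketched in a few lines: shuffle the pair, strip the model attribution from what the annotator sees, and keep the mapping separately so the judgment can be resolved afterwards. The `blind_pair` helper and the trace fields below are hypothetical and only illustrate the technique, not Potato's implementation.

```python
import random
from typing import Dict, Tuple


def blind_pair(trace_x: Dict, trace_y: Dict, rng: random.Random) -> Tuple[Dict, Dict]:
    """Return (annotator_view, answer_key) for one blind comparison.

    annotator_view maps "A"/"B" to trace content with the model name removed.
    answer_key maps "A"/"B" back to the model name and is stored separately.
    """
    pair = [trace_x, trace_y]
    rng.shuffle(pair)  # random position, so the label "A" carries no information
    view = {}
    key = {}
    for label, trace in zip(("A", "B"), pair):
        view[label] = {k: v for k, v in trace.items() if k != "model"}
        key[label] = trace["model"]
    return view, key


rng = random.Random(0)  # seeded only to make the sketch reproducible
view, key = blind_pair(
    {"model": "agent-v1", "steps": ["search", "answer"]},
    {"model": "agent-v2", "steps": ["answer"]},
    rng,
)
print(view)  # what the annotator sees: no "model" field
judgment = "A"  # hypothetical annotator choice
print("preferred model:", key[judgment])
```

Randomizing the position per pair matters as much as hiding the name: if one model always appeared as "A", position bias would leak into the preference data.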

When to Use Each Tool

Use Potato when:

- Annotation is your primary goal: You need structured human judgments, not just monitoring

- You need coding agent support: Diff rendering, inline comments, terminal output display

- You are collecting PRM training data: Step-level correctness labels with running scores

- You need self-hosting: Data stays on your infrastructure, no cloud dependency

- You work with multiple agent frameworks: 13 trace format converters vs. single-framework lock-in

- You need rich evaluation schemas: Trajectory eval, rubric eval, code review, pairwise comparison

- Budget is a constraint: Fully free and open source

Use LangSmith when:

- You are already using LangChain/LangGraph: Traces flow in automatically with zero configuration

- Production monitoring is your primary need: Real-time tracing, latency tracking, cost monitoring

- Annotation is secondary: You need basic scoring and feedback, not deep evaluation schemas

- You want a managed service: No infrastructure to maintain

- Your team is small: The free tier may be sufficient for light evaluation

Use Langfuse when:

- You need open-source observability: Self-hosted monitoring and tracing

- You use multiple LLM providers: Good integrations across OpenAI, Anthropic, LangChain, LlamaIndex

- Annotation is not your primary use case: Scoring is available but not the focus

- You want prompt management alongside tracing: Langfuse bundles prompt versioning with observability

- You need a free alternative to LangSmith for monitoring: Langfuse's open-source core covers most monitoring needs

The Complementary Approach: Observability + Annotation

For many teams, the best setup is to use an observability tool (LangSmith or Langfuse) for production monitoring and Potato for deep human evaluation. The workflow looks like this:

- Instrument your agent with LangSmith or Langfuse for production tracing

- Sample traces that need human review (failures, edge cases, random samples)

- Export those traces from your observability tool

- Convert and import into Potato using the built-in converters

- Run human evaluation with Potato's rich annotation schemas

- Feed results back into your development cycle

```bash
# Example: sample 100 failed traces from Langfuse and import to Potato
python -m potato.convert_traces \
  --input langfuse_failed_traces.json \
  --output data/traces_to_review.jsonl \
  --format langfuse \
  --sample 100
```

This gives you the real-time visibility of an observability platform with the annotation depth of a purpose-built annotation tool.
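
The sampling step in this workflow can also be done before conversion, for example to keep every failure and add a random slice of successful runs as a baseline. The sketch below shows one such policy. The `status` field and both helper functions are assumptions for illustration; adapt the failure test to whatever your exported traces actually contain.

```python
import json
import random
from typing import Dict, List


def sample_for_review(traces: List[Dict], n_random: int, seed: int = 0) -> List[Dict]:
    """Keep every failed trace plus a random sample of the successful ones."""
    failed = [t for t in traces if t.get("status") == "error"]
    succeeded = [t for t in traces if t.get("status") != "error"]
    rng = random.Random(seed)
    extra = rng.sample(succeeded, min(n_random, len(succeeded)))
    return failed + extra


def to_jsonl(traces: List[Dict]) -> str:
    """Serialize one trace per line."""
    return "".join(json.dumps(t) + "\n" for t in traces)


# Ten synthetic traces; ids 0 and 5 are marked as failed.
traces = [{"id": i, "status": "error" if i % 5 == 0 else "ok"} for i in range(10)]
selected = sample_for_review(traces, n_random=3)
print(to_jsonl(selected), end="")
```

Including some successful traces keeps annotators from reviewing only failures, which would otherwise skew any error-rate estimate drawn from the annotated set.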

Key Differentiators Summary

Potato is the only free, self-hosted tool that combines:

- Coding agent diff rendering with syntax highlighting and inline comments

- Process reward model (PRM) data collection with step-level correctness and severity labels

- Live coding agent observation with pause, resume, and takeover capabilities

- 13-format trace conversion for agents built with any framework

- Hierarchical error taxonomies with severity-weighted running scores

- Three pairwise comparison modes (side-by-side, sequential, blind)

- Multi-criteria rubric evaluation with configurable dimensions and scales

- GitHub PR-style code review with categorized inline comments and verdicts

No other tool in the current landscape, whether open source or commercial, provides this combination. LangSmith and Langfuse are excellent observability tools, but they are not annotation tools. Label Studio and Argilla are general annotation tools, but they have no agent-specific features. Scale AI provides managed annotation services, but at a cost and without the self-hosting and customization that research teams need.

If your goal is to deeply understand how and why your agents succeed or fail, and to collect the structured training data needed to improve them, Potato fills a gap that no other tool currently addresses.

Migration Paths

From LangSmith to Potato (for annotation)

```bash
# 1. Export your dataset from LangSmith
langsmith export dataset my_eval_dataset -o langsmith_data.jsonl

# 2. Convert to Potato format
python -m potato.convert_traces \
  --input langsmith_data.jsonl \
  --output data/traces.jsonl \
  --format langsmith

# 3. Create your Potato config and start annotating
potato start config.yaml -p 8000
```

From Langfuse to Potato (for annotation)

```python
# 1. Export traces from Langfuse via API
import json

import requests

response = requests.get(
    "https://cloud.langfuse.com/api/public/traces",
    # The Langfuse public API uses HTTP Basic auth:
    # public key as the username, secret key as the password.
    auth=("your_public_key", "your_secret_key"),
    params={"limit": 500},
)
response.raise_for_status()  # fail loudly on auth or request errors

with open("langfuse_traces.json", "w") as f:
    json.dump(response.json()["data"], f)
```

```bash
# 2. Convert to Potato format
python -m potato.convert_traces \
  --input langfuse_traces.json \
  --output data/traces.jsonl \
  --format langfuse

# 3. Start annotating
potato start config.yaml -p 8000
```

From Label Studio or Argilla (for agent evaluation)

If you are currently using Label Studio or Argilla for general annotation and want to add agent evaluation capabilities, Potato can run alongside your existing tool. Use Label Studio or Argilla for your non-agent annotation tasks (NER, classification, etc.) and Potato for agent-specific evaluation that requires trace rendering, per-step annotation, and code review.

Conclusion

Choosing between Potato, LangSmith, and Langfuse is not a matter of which is "best" in the abstract. It depends on your primary need:

- If you need to monitor agents in production, use LangSmith or Langfuse.

- If you need to deeply evaluate agent behavior with structured human annotation, use Potato.

- If you need both, use them together. They are complementary, not competitive.

The key question to ask is: "Is my primary goal to observe what agents do, or to evaluate how well they do it?" If the answer is evaluate, Potato is purpose-built for that job.