Watch, Pause, and Rewind: Live Coding Agent Observation in Potato

Tutorial for setting up live coding agent observation with Ollama, Anthropic API, or Claude Agent SDK. Includes pause, rollback, branching, and trajectory export.

What Makes Live Observation Different

Most coding agent evaluation happens after the fact: an agent runs, produces a trace, and human reviewers analyze the recording. Live observation flips this model. Instead of reviewing a completed trajectory, annotators watch the agent work in real time, seeing each file edit, terminal command, and reasoning step as it happens.

This approach offers several advantages over static trace review. Annotators can intervene when they spot a problem, preventing the agent from going further down a wrong path. They can pause the agent to examine a diff more carefully before it moves on. They can send natural language instructions to redirect the agent. And critically, they can roll back to any previous checkpoint and let the agent try a different approach, producing branching trajectories that are invaluable for preference learning.

Live observation is not a replacement for static trace annotation. It is a complementary mode that produces different kinds of training data. Static annotation is better for large-scale data collection at fixed cost. Live observation is better for targeted data collection, understanding agent failure modes, and generating high-quality branching preference data.

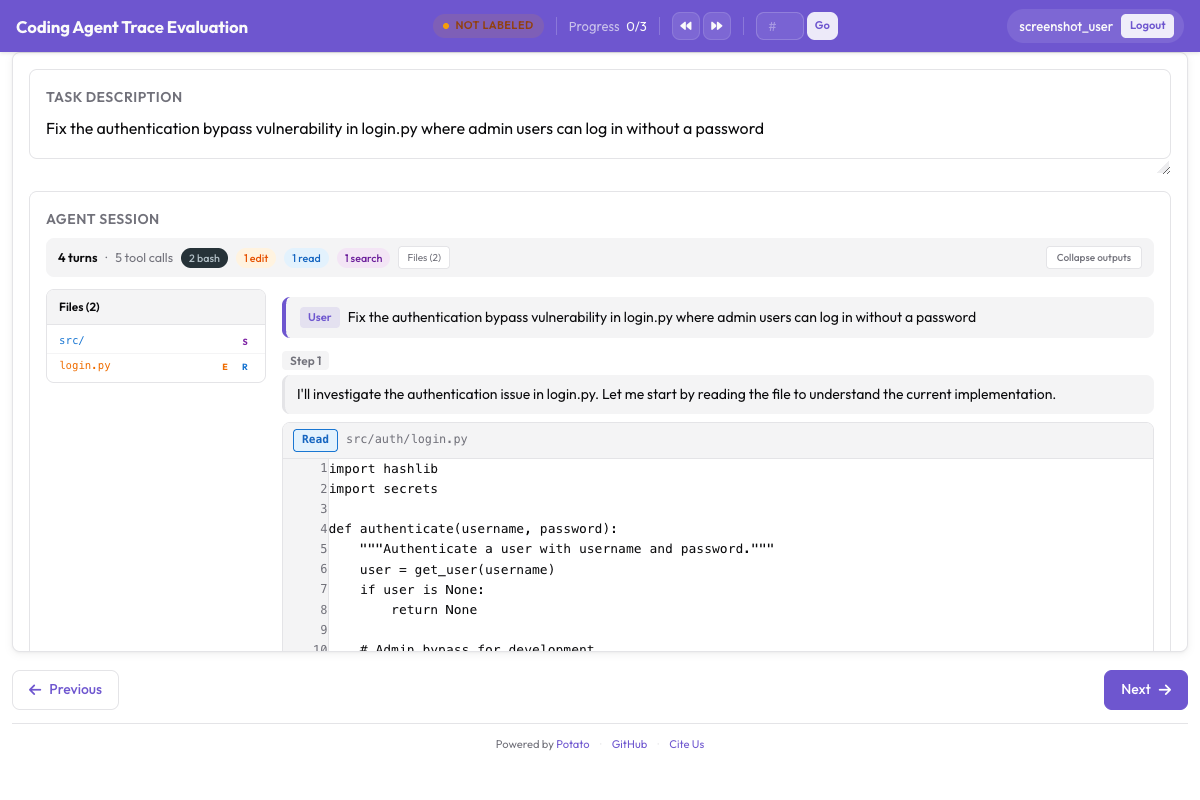

The live coding agent interface streams agent actions in real time, showing code diffs and terminal output as the agent works:

Live coding agent observation with real-time diff rendering and terminal output

Three Backends

Potato supports three backends for live coding agent observation. Each runs a coding agent within a sandboxed environment while streaming its actions to the annotation interface in real time.

Ollama (Fully Local)

The Ollama backend runs entirely on your machine with no API keys and no network calls. This is ideal for privacy-sensitive codebases or when you want to experiment without incurring API costs.

First, install Ollama and pull a model with tool-use capabilities:

```bash
# Install Ollama
curl -fsSL https://ollama.ai/install.sh | sh

# Pull a coding-capable model
ollama pull qwen2.5-coder:32b

# Verify the model is available
ollama list
```

Configure Potato to use the Ollama backend:

```yaml
# config.yaml
project_name: "Live Agent Observation - Ollama"
port: 8000

live_coding_agent:
  enabled: true
  backend: "ollama"

  ollama:
    model: "qwen2.5-coder:32b"
    host: "http://localhost:11434"
    temperature: 0.2
    max_tokens: 4096
    num_ctx: 32768                 # Context window size

  sandbox:
    type: "docker"                 # "docker" or "local"
    image: "python:3.11-slim"      # Base image for sandboxed execution
    workspace: "./workspace/"      # Agent's working directory
    timeout: 600                   # Max seconds per agent session

  streaming:
    update_interval_ms: 100        # How often to push updates to the UI
    buffer_output: true            # Buffer terminal output for smoother rendering

  checkpoints:
    enabled: true
    strategy: "git"                # Git-based checkpoints
    auto_commit_on_file_change: true
    commit_message_prefix: "[potato-checkpoint]"
```

Anthropic API (Claude with Tool Use)

The Anthropic API backend connects to Claude models with tool use capabilities. This provides stronger reasoning and code generation than most local models, at the cost of API usage fees.

```bash
# Set your API key
export ANTHROPIC_API_KEY="sk-ant-..."
```

```yaml
# config.yaml
project_name: "Live Agent Observation - Claude"
port: 8000

live_coding_agent:
  enabled: true
  backend: "anthropic"

  anthropic:
    model: "claude-sonnet-4-20250514"
    api_key_env: "ANTHROPIC_API_KEY"
    max_tokens: 8192
    temperature: 0.1
    tools:
      - "file_read"
      - "file_edit"
      - "bash_command"
      - "directory_list"
      - "file_search"
    system_prompt: >
      You are a coding agent. You will be given a task description and
      access to a codebase. Use the provided tools to read files, make
      edits, and run commands to complete the task. Think step by step
      and verify your changes by running tests.

  sandbox:
    type: "docker"
    image: "python:3.11-slim"
    workspace: "./workspace/"
    timeout: 900
    allowed_commands:              # Whitelist for bash commands
      - "python"
      - "pip"
      - "pytest"
      - "git"
      - "ls"
      - "cat"
      - "find"
      - "grep"

  streaming:
    update_interval_ms: 50
    show_thinking: true            # Show Claude's thinking in real time

  checkpoints:
    enabled: true
    strategy: "git"
    auto_commit_on_file_change: true
```

Claude Agent SDK (Full Claude Code Capabilities)

The Claude Agent SDK backend provides the most capable agent, with the full set of Claude Code tools and autonomous behavior. This backend requires the claude-agent-sdk package.

```bash
# Install the Claude Agent SDK
pip install claude-agent-sdk
```

```yaml
# config.yaml
project_name: "Live Agent Observation - Claude Agent SDK"
port: 8000

live_coding_agent:
  enabled: true
  backend: "claude_agent_sdk"

  claude_agent_sdk:
    api_key_env: "ANTHROPIC_API_KEY"
    model: "claude-sonnet-4-20250514"
    max_turns: 50                  # Maximum number of agent turns
    permission_mode: "auto"        # "auto", "ask", or "restricted"
    allowed_tools:
      - "Read"
      - "Edit"
      - "Write"
      - "Bash"
      - "Glob"
      - "Grep"
    restricted_commands:           # Bash commands to block
      - "rm -rf /"
      - "sudo"
      - "curl"
      - "wget"

  sandbox:
    type: "docker"
    image: "node:20-slim"
    workspace: "./workspace/"
    timeout: 1200
    mount_volumes:
      - "./test-repo:/workspace/repo"

  streaming:
    update_interval_ms: 50
    show_thinking: true
    show_tool_inputs: true

  checkpoints:
    enabled: true
    strategy: "git"
    auto_commit_on_file_change: true
    max_checkpoints: 100
```

The Annotation Workflow

Once the server is running, the live observation workflow proceeds through a series of stages.

Starting a Session

The annotator opens the Potato interface and sees a task description input field. They paste or type the task the agent should complete, such as "Fix the failing test in tests/test_parser.py caused by the new config format" or "Add pagination support to the /api/users endpoint."

```bash
# Start the server
potato start config.yaml -p 8000
```

The annotator clicks "Start Agent" and the coding agent begins working. Each action appears in real time in the CodingTraceDisplay panel.

Watching the Agent Work

As the agent works, the annotator sees each step appear in the trace viewer:

- Thinking steps appear as collapsible gray blocks showing the agent's reasoning.

- File reads appear as syntax-highlighted code blocks with line numbers and the file path.

- File edits appear as unified diffs with red/green highlighting.

- Terminal commands appear as dark terminal blocks with the command, output, and exit code.

- File tree updates in the sidebar as files are created, modified, or read.

A progress indicator at the top shows the current step number and elapsed time. The agent's status is shown as "Thinking...", "Editing file...", "Running command...", etc.

Pause and Instruction Controls

At any point during agent execution, the annotator can interact using the control bar:

Pause: Freezes the agent after its current step completes. The agent does not proceed to the next step until resumed. Use this to carefully examine a diff or terminal output before the agent moves on.

Send Instruction: While paused (or even while running), type a natural language message that gets injected into the agent's context. For example: "Don't modify the database schema, use a migration instead" or "Check the error log at /var/log/app.log before making changes."

Resume: Continues agent execution after a pause.

Stop: Terminates the agent session entirely. The trajectory up to this point is saved.
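The control semantics above can be sketched as a small state machine. This is an illustrative model only, not Potato's implementation: `AgentController`, `tick`, `fake_step`, and the message dictionaries are hypothetical names chosen for the example. It shows that a pause takes effect at a step boundary and that queued instructions are injected into the context before the next step runs.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AgentController:
    """Hypothetical model of the control bar: pause, resume, instruct, stop."""

    paused: bool = False
    stopped: bool = False
    context: list = field(default_factory=list)      # messages the agent sees
    trajectory: list = field(default_factory=list)   # completed steps
    _pending: deque = field(default_factory=deque)   # queued instructions

    def pause(self):
        # Takes effect at the next step boundary; a running step is not interrupted.
        self.paused = True

    def resume(self):
        self.paused = False

    def stop(self):
        # The trajectory collected so far is kept.
        self.stopped = True

    def send_instruction(self, text):
        # Allowed while paused or running; delivered before the next step.
        self._pending.append(text)

    def tick(self, run_step):
        """Run one agent step if allowed. Returns True if a step ran."""
        if self.stopped or self.paused:
            return False
        while self._pending:
            self.context.append({"role": "human", "content": self._pending.popleft()})
        step = run_step(self.context)
        self.trajectory.append(step)
        self.context.append({"role": "agent", "content": step})
        return True


def fake_step(context):
    # Stand-in for a real agent call: reports how many messages it saw.
    return f"step after {len(context)} messages"


ctl = AgentController()
ctl.tick(fake_step)                      # step 1 runs
ctl.pause()
ctl.send_instruction("Use a migration instead")
ran_while_paused = ctl.tick(fake_step)   # no step runs while paused
ctl.resume()
ctl.tick(fake_step)                      # instruction is injected, then step 2 runs
ctl.stop()
print(ran_while_paused, len(ctl.trajectory), ctl.context[1]["content"])
```

Queuing instructions rather than applying them immediately keeps each agent step atomic, which is one plausible way to let annotators type while the agent is still running.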

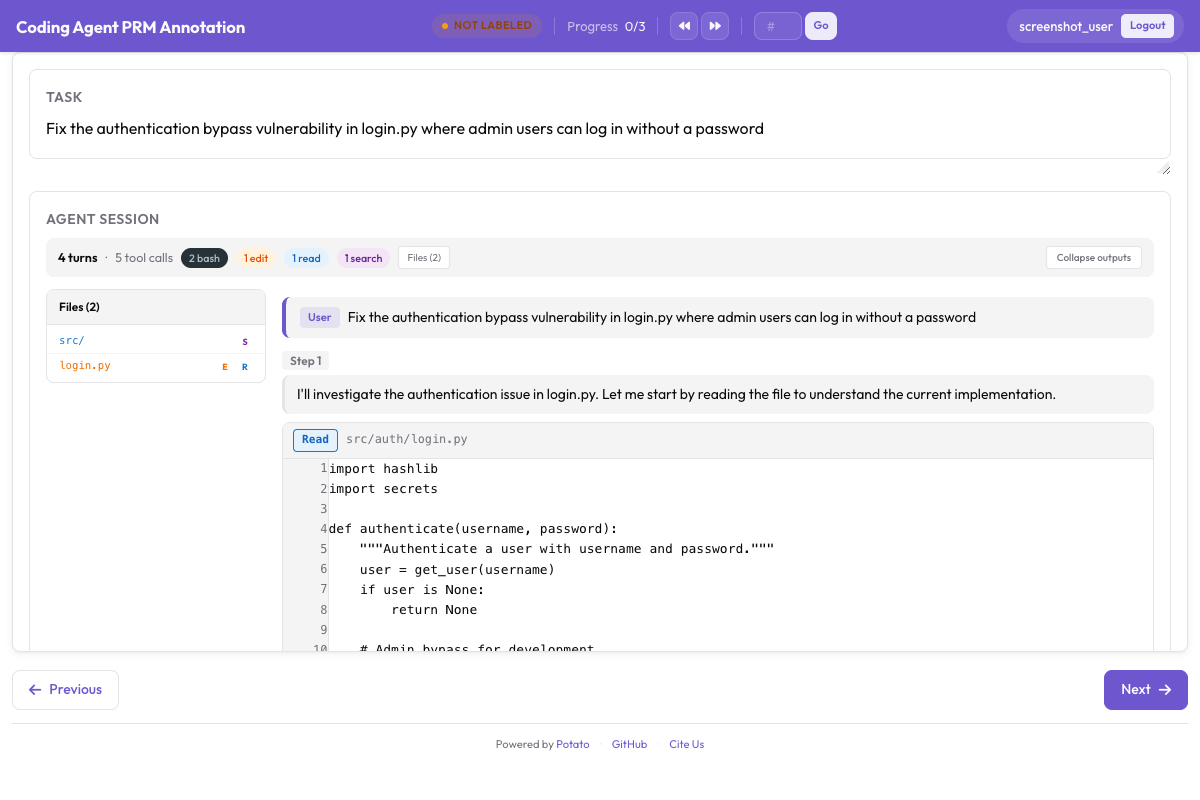

Annotators can evaluate the agent's work using PRM annotation alongside the trace display:

PRM annotation interface for step-level correctness labeling alongside the coding trace

```yaml
# Control bar configuration
live_coding_agent:
  controls:
    pause_enabled: true
    instruction_enabled: true
    stop_enabled: true
    rollback_enabled: true
    branch_enabled: true
    pause_keyboard_shortcut: "Space"
    instruction_keyboard_shortcut: "i"
```

Git-Based Checkpoint System

The checkpoint system is the foundation of Potato's live observation capabilities. It enables rollback, branching, and trajectory export by creating a git commit after every file change the agent makes.

How It Works

When a live observation session starts, Potato initializes a git repository in the sandbox workspace (or uses the existing one). After every file edit the agent performs, Potato automatically creates a commit with a structured message:

```text
[potato-checkpoint] Step 7: Edit src/parser.py

- Modified lines 45-52
- Agent reasoning: Fix the regex pattern to handle escaped quotes
```

This creates a linear history of commits that exactly corresponds to the steps in the trajectory. Each checkpoint captures the full state of the workspace at that point.
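Because each checkpoint subject line follows a fixed pattern, the history can be mapped back to trajectory steps with a few lines of Python. The sketch below is an assumption-laden illustration: `Checkpoint` and `parse_checkpoints` are hypothetical helpers, the sample log is made up for the example, and the regular expression assumes the default `[potato-checkpoint]` prefix and `git log --oneline` output.

```python
import re
from typing import NamedTuple

# Matches one `git log --oneline` line for a checkpoint commit, e.g.
# "3b7e9f0 [potato-checkpoint] Step 10: Edit src/parser.py"
CHECKPOINT_RE = re.compile(
    r"^(?P<sha>[0-9a-f]+) \[potato-checkpoint\] Step (?P<step>\d+): (?P<summary>.+)$"
)


class Checkpoint(NamedTuple):
    sha: str
    step: int
    summary: str


def parse_checkpoints(log_text: str) -> list[Checkpoint]:
    """Return checkpoint commits sorted by step number (oldest first)."""
    found = []
    for line in log_text.splitlines():
        match = CHECKPOINT_RE.match(line.strip())
        if match:  # commits that are not Potato checkpoints are skipped
            found.append(Checkpoint(match["sha"], int(match["step"]), match["summary"]))
    return sorted(found, key=lambda c: c.step)


# Illustrative input only; the second-to-last line is not a checkpoint.
sample_log = """\
f8a2c1d [potato-checkpoint] Step 12: Edit tests/test_parser.py
3b7e9f0 [potato-checkpoint] Step 10: Edit src/parser.py
1234abc Unrelated manual commit
7d0a3c1 [potato-checkpoint] Step 0: Initial state
"""
checkpoints = parse_checkpoints(sample_log)
print([c.step for c in checkpoints])  # [0, 10, 12]
```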

```bash
# You can inspect checkpoints directly with git
cd workspace/
git log --oneline

# Output:
# f8a2c1d [potato-checkpoint] Step 12: Edit tests/test_parser.py
# 3b7e9f0 [potato-checkpoint] Step 10: Edit src/parser.py
# a1c4d8e [potato-checkpoint] Step 8: Edit src/parser.py
# 9e2f6b3 [potato-checkpoint] Step 5: Edit src/config.py
# 7d0a3c1 [potato-checkpoint] Step 0: Initial state
```

Rollback

The annotator can click "Rollback" and select any previous checkpoint from a dropdown. Potato resets the workspace to that state using git checkout and rewinds the trajectory display to match. The agent resumes from that point with its context trimmed to the corresponding step.

This is useful when an annotator sees the agent go down a wrong path. Instead of letting it continue and waste time, they can rewind to the last good state and let the agent try again (possibly with an instruction to guide it differently).
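Since checkpoints are created only when files change, not every trajectory step has its own commit. The following sketch shows one way a rollback target could be resolved and the trajectory trimmed to match. It is not Potato's code: `plan_rollback` is a hypothetical helper, and mapping a requested step to the latest checkpoint at or before it is an assumption made for the example. Resetting the workspace itself is not shown.

```python
from bisect import bisect_right


def plan_rollback(checkpoint_steps, trajectory, target_step):
    """Pick the checkpoint to restore and split the trajectory around it.

    checkpoint_steps: sorted step numbers that have a checkpoint commit.
    trajectory: list of step dicts, each with a "step_idx" key.
    target_step: the step the annotator wants to return to.
    """
    # Latest checkpoint at or before the requested step.
    pos = bisect_right(checkpoint_steps, target_step)
    if pos == 0:
        raise ValueError("no checkpoint at or before the requested step")
    restore_step = checkpoint_steps[pos - 1]
    kept = [s for s in trajectory if s["step_idx"] <= restore_step]
    discarded = [s for s in trajectory if s["step_idx"] > restore_step]
    return restore_step, kept, discarded


steps = [{"step_idx": i} for i in range(13)]
restore, kept, discarded = plan_rollback([0, 5, 8, 10, 12], steps, target_step=9)
print(restore, len(kept), len(discarded))  # 8 9 4
```

The `kept` list corresponds to the context the agent resumes with, while `discarded` is what a branching workflow would preserve as the abandoned path.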

Branching Trajectories

Branching extends rollback by preserving both paths. When the annotator rolls back and the agent takes a different approach, Potato creates a named branch in git and tracks both trajectories:

```text
Step 0 → Step 1 → Step 2 → Step 3 → Step 4           (Branch A: original path)
                        ↘
                           Step 3' → Step 4' → Step 5'  (Branch B: after rollback)
```

The annotator can create multiple branches from any checkpoint, producing a tree of trajectories. This is extremely valuable for preference learning because each branch pair represents a natural preference comparison: the annotator explicitly chose to roll back because they judged Branch A to be wrong, making Branch B the preferred trajectory (at least from the branch point onward).
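To make the branch-pair idea concrete, here is a minimal sketch that turns two branches sharing a common prefix into a single preference record. `branch_preference` is a hypothetical helper, and detecting the branch point by comparing steps for equality is an assumption of this example; the output keys follow the field names used in Potato's branch preference export.

```python
def branch_preference(task, rejected, chosen, reason):
    """Build a preference record from two branches that share a prefix.

    rejected / chosen: (branch_name, steps) tuples; steps are dicts.
    The branch point is the last step index at which both branches agree.
    """
    (rejected_name, rejected_steps), (chosen_name, chosen_steps) = rejected, chosen
    shared = 0
    for a, b in zip(rejected_steps, chosen_steps):
        if a != b:
            break
        shared += 1
    return {
        "task": task,
        "branch_point_step": shared - 1,
        "branch_point_reason": reason,
        "rejected_branch": rejected_name,
        "rejected_steps": rejected_steps[shared:],
        "chosen_branch": chosen_name,
        "chosen_steps": chosen_steps[shared:],
    }


prefix = [
    {"step_idx": 0, "type": "file_read", "path": "tests/test_parser.py"},
    {"step_idx": 1, "type": "thinking"},
    {"step_idx": 2, "type": "bash_command", "command": "pytest"},
]
branch_a = prefix + [{"step_idx": 3, "type": "file_edit", "path": "src/wrong_file.py"}]
branch_b = prefix + [{"step_idx": 3, "type": "file_edit", "path": "src/parser.py"}]

pair = branch_preference(
    "Fix the failing test in tests/test_parser.py",
    rejected=("branch-A", branch_a),
    chosen=("branch-B", branch_b),
    reason="Agent started modifying the wrong file",
)
print(pair["branch_point_step"])  # 2
```

Only the steps after the branch point are kept on each side, so the shared prefix can serve as the prompt and the two suffixes as the rejected and chosen completions.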

```yaml
# Branching configuration
live_coding_agent:
  branching:
    enabled: true
    max_branches_per_session: 10
    auto_name_branches: true           # "branch-A", "branch-B", etc.
    require_reason_on_rollback: true   # Annotator must explain why they rolled back
    compare_branches_view: true        # Side-by-side view of branch outcomes
```

Export Formats

Live observation sessions produce rich trajectory data that can be exported for various training objectives.

Linear Trajectory Export

Export each branch as an independent trajectory:

```bash
potato export \
  --format trajectories \
  --project ./output/ \
  --output ./training_data/trajectories.jsonl \
  --flatten_branches true
```

```json
{
  "session_id": "session_001",
  "branch": "branch-A",
  "task": "Fix the failing test in tests/test_parser.py",
  "steps": [
    {"step_idx": 0, "type": "file_read", "path": "tests/test_parser.py", "...": "..."},
    {"step_idx": 1, "type": "thinking", "content": "The test expects..."},
    {"step_idx": 2, "type": "file_edit", "path": "src/parser.py", "diff": "..."},
    {"step_idx": 3, "type": "bash_command", "command": "pytest tests/test_parser.py"}
  ],
  "human_interventions": [
    {"after_step": 2, "type": "instruction", "content": "Use a migration instead"}
  ],
  "rollback_from_step": null,
  "outcome": "resolved"
}
```

Preference Pairs from Branches

Export branch pairs as preference data for DPO/RLHF:

```bash
potato export \
  --format branch_preferences \
  --project ./output/ \
  --output ./training_data/branch_preferences.jsonl
```

```json
{
  "session_id": "session_001",
  "task": "Fix the failing test in tests/test_parser.py",
  "branch_point_step": 2,
  "branch_point_reason": "Agent started modifying the wrong file",
  "rejected_branch": "branch-A",
  "rejected_steps": [
    {"step_idx": 3, "type": "file_edit", "path": "src/wrong_file.py", "...": "..."},
    {"step_idx": 4, "type": "bash_command", "command": "pytest", "exit_code": 1}
  ],
  "chosen_branch": "branch-B",
  "chosen_steps": [
    {"step_idx": 3, "type": "file_edit", "path": "src/parser.py", "...": "..."},
    {"step_idx": 4, "type": "bash_command", "command": "pytest", "exit_code": 0}
  ]
}
```

PRM Labels from Live Observation

Combine live observation with PRM labeling. The rollback points naturally indicate first-error steps:

```bash
potato export \
  --format prm_from_branches \
  --project ./output/ \
  --output ./training_data/prm_live.jsonl
```

In this export, the step where the annotator chose to roll back is automatically labeled as the first error step, and the new branch's steps are labeled as correct (since the annotator accepted them).
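The labeling rule can be illustrated with a short sketch. It is a rough approximation under stated assumptions rather than the exporter's actual logic: `prm_labels` is a hypothetical function, the label strings are invented for the example, and treating rejected-branch steps before the first error as correct is an assumption the text above does not confirm.

```python
def prm_labels(rejected_steps, chosen_steps, first_error_step):
    """Assign step-level labels to both sides of a rollback.

    Label strings ("correct", "first_error", "after_error") are illustrative.
    """
    labels = []
    for step in rejected_steps:
        idx = step["step_idx"]
        if idx < first_error_step:
            label = "correct"          # assumption: steps before the error are fine
        elif idx == first_error_step:
            label = "first_error"      # where the annotator decided to roll back
        else:
            label = "after_error"
        labels.append({"branch": "rejected", "step_idx": idx, "label": label})
    for step in chosen_steps:
        # The annotator accepted the new branch, so its steps count as correct.
        labels.append({"branch": "chosen", "step_idx": step["step_idx"], "label": "correct"})
    return labels


rejected = [{"step_idx": 3}, {"step_idx": 4}]
chosen = [{"step_idx": 3}, {"step_idx": 4}]
labels = prm_labels(rejected, chosen, first_error_step=3)
for row in labels:
    print(row)
```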

Code Review Datasets

Export annotator instructions and rollback reasons as code review training data:

```bash
potato export \
  --format code_review \
  --project ./output/ \
  --output ./training_data/code_review.jsonl
```

Full Quick Start

Here is the complete sequence to go from zero to a running live observation session with Ollama:

```bash
# 1. Install Potato with live agent support
#    (quoted so shells such as zsh do not expand the brackets)
pip install "potato-annotation[live-agents]"

# 2. Install and start Ollama
curl -fsSL https://ollama.ai/install.sh | sh
ollama pull qwen2.5-coder:32b

# 3. Set up a workspace with a repo to work on
mkdir -p workspace/
git clone https://github.com/example/test-project workspace/repo

# 4. Create the config file
cat > config.yaml << 'YAML'
project_name: "Live Agent Observation"
port: 8000

live_coding_agent:
  enabled: true
  backend: "ollama"

  ollama:
    model: "qwen2.5-coder:32b"
    host: "http://localhost:11434"
    temperature: 0.2
    num_ctx: 32768

  sandbox:
    type: "local"
    workspace: "./workspace/repo"
    timeout: 600

  streaming:
    update_interval_ms: 100

  checkpoints:
    enabled: true
    strategy: "git"
    auto_commit_on_file_change: true

  controls:
    pause_enabled: true
    instruction_enabled: true
    rollback_enabled: true
    branch_enabled: true

  branching:
    enabled: true
    max_branches_per_session: 5
    require_reason_on_rollback: true

annotation_schemes:
  - annotation_type: radio
    name: outcome
    label: "Final outcome"
    options:
      - value: "resolved"
        text: "Task Fully Resolved"
      - value: "partial"
        text: "Partially Resolved"
      - value: "failed"
        text: "Failed"

  - annotation_type: text_input
    name: notes
    label: "Session Notes"
    placeholder: "Key observations about agent behavior..."
    required: false

output:
  path: "./output/"
  format: "jsonl"
  export_formats:
    - "trajectories"
    - "branch_preferences"
    - "prm_from_branches"

annotators:
  - username: "observer1"
    password: "observer_pw_1"
YAML

# 5. Start Potato
potato start config.yaml -p 8000

# 6. Open http://localhost:8000 in your browser
```

After logging in, paste a task description like "Add input validation to the /api/users POST endpoint" and click "Start Agent." Watch the agent work, pause when something looks wrong, send instructions to guide it, and roll back to try different approaches. When the session is complete, rate the outcome and add notes.

Best Practices

Start with clear, scoped tasks. Live observation works best with tasks that take 5-15 minutes for the agent to complete. Very short tasks do not produce enough trajectory data to be useful. Very long tasks cause annotator fatigue.

Use Docker sandboxing in production. The local sandbox mode is convenient for development, but Docker sandboxing prevents the agent from accidentally modifying your host system. Always use Docker when running with untrusted agent models.

Record rollback reasons. Enable require_reason_on_rollback so that every branch point comes with a human explanation of what went wrong. These reasons are valuable training signal on their own and improve the quality of preference data.

Compare multiple backends. Run the same set of tasks with different backends (Ollama, Anthropic API, Claude Agent SDK) to generate cross-agent preference data. This is straightforward with Potato since you only need to change the backend section of the config.

Export early and often. Run exports after each annotation session rather than waiting until the end. This protects against data loss and lets you monitor data quality throughout the collection process.