intermediateaudio

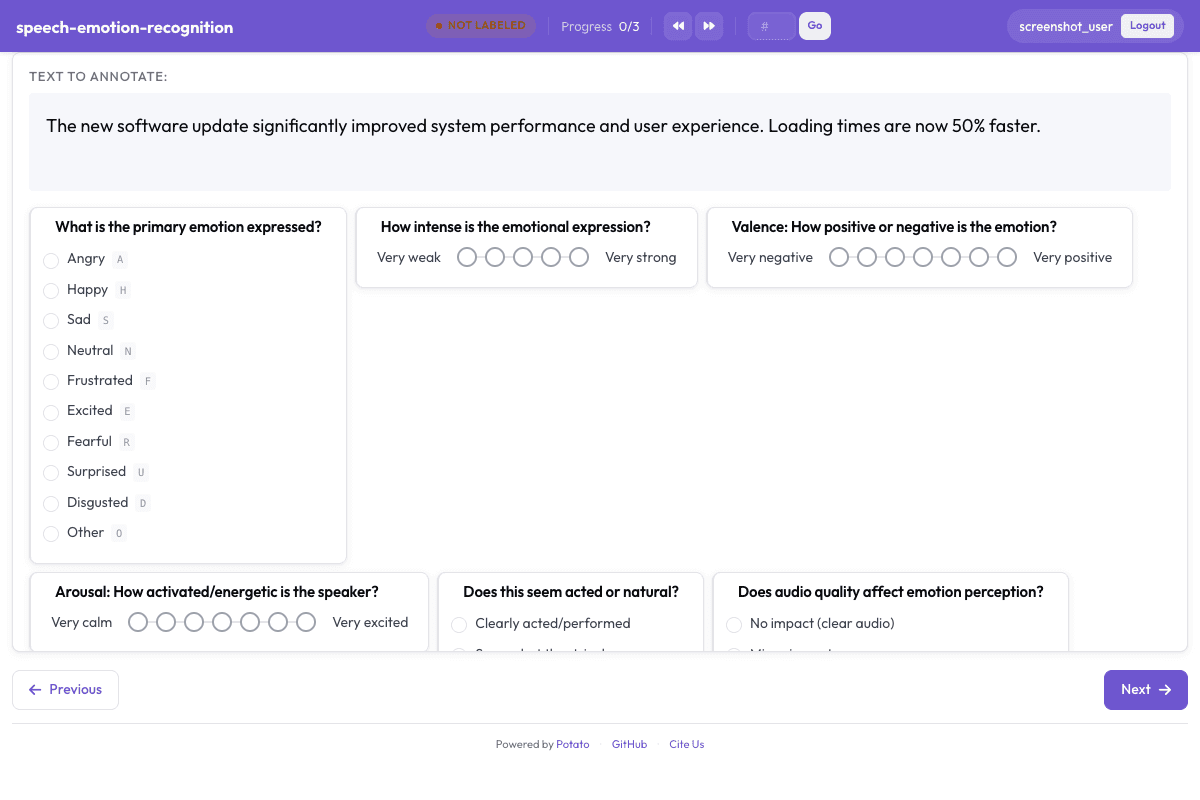

Speech Emotion Recognition

Classify emotional content in speech following IEMOCAP and CREMA-D annotation schemes.

Configuration Fileconfig.yaml

yaml

annotation_task_name: "Speech Emotion Recognition"

task_dir: "."

port: 8000

# Data configuration

data_files:

- "data/speech_clips.json"

item_properties:

id_key: id

text_key: transcript

# Annotation schemes

annotation_schemes:

# Primary emotion category

- annotation_type: radio

name: emotion_category

description: "What is the primary emotion expressed?"

labels:

- name: Angry

key_value: "a"

- name: Happy

key_value: "h"

- name: Sad

key_value: "s"

- name: Neutral

key_value: "n"

- name: Frustrated

key_value: "f"

- name: Excited

key_value: "e"

- name: Fearful

key_value: "r"

- name: Surprised

key_value: "u"

- name: Disgusted

key_value: "d"

- name: Other

key_value: "o"

sequential_key_binding: true

# Emotion intensity

- annotation_type: likert

name: intensity

description: "How intense is the emotional expression?"

size: 5

min_label: "Very weak"

max_label: "Very strong"

# Valence (positive-negative)

- annotation_type: likert

name: valence

description: "Valence: How positive or negative is the emotion?"

size: 7

min_label: "Very negative"

max_label: "Very positive"

# Arousal (activation level)

- annotation_type: likert

name: arousal

description: "Arousal: How activated/energetic is the speaker?"

size: 7

min_label: "Very calm"

max_label: "Very excited"

# Speaking style

- annotation_type: radio

name: speaking_style

description: "Does this seem acted or natural?"

labels:

- Clearly acted/performed

- Somewhat theatrical

- Natural/spontaneous

- Cannot determine

# Audio quality impact

- annotation_type: radio

name: quality_impact

description: "Does audio quality affect emotion perception?"

labels:

- No impact (clear audio)

- Minor impact

- Significant impact

- Cannot judge emotion due to quality

# Confidence

- annotation_type: likert

name: confidence

description: "Confidence in your emotion judgment"

size: 5

min_label: "Uncertain"

max_label: "Very certain"

# User settings

require_password: false

# Output

output_annotation_dir: "annotation_output/"

output_annotation_format: "json"

Get This Design

This design is available in our showcase. Copy the configuration below to get started.

Quick start:

# Create your project folder mkdir speech-emotion-recognition cd speech-emotion-recognition # Copy config.yaml from above potato start config.yaml

Details

Annotation Types

radiolikert

Domain

AudioSpeech

Use Cases

emotion recognitionaffective computingspeech analysis

Tags

audioemotionspeechiemocapaffective computing

Related Designs

Audio Transcription Review

Review and correct automatic speech recognition transcriptions with waveform visualization.

likertmultiselect

Audio-Visual Sentiment Analysis

Rate sentiment in speech segments following CMU-MOSI and CMU-MOSEI multimodal annotation protocols.

likertradio

Speech Intelligibility Rating

Rate speech intelligibility for pathological speech following TORGO database annotation protocols.

likertradio